The Wheeler–Gleason Test, Reimagined

For most of the history of photojournalism, ethical image manipulation was a relatively stable concept. Photographers captured reality through a lens, editors performed minor technical adjustments, and audiences trusted that news images reflected the world as it truly was. But the rise of generative AI has upended this foundation. Today, images can be created without cameras, altered without detection, and circulated globally within seconds. Visual truth—once the bedrock of journalism—is now increasingly fragile.

As a young professor, I taught the work of Tom Wheeler and Tim Gleason of the University of Oregon. Their classic of media ethics, the Wheeler–Gleason Test (Wheeler, 2002), has long been used to determine whether a news photograph was ethically edited, and it is worth revisiting. Yet as we move deeper into the AI era, this test must evolve. Below is a narrative exploration of the original framework and its modernized successor.

The Classic Wheeler–Gleason Test: Built for a Camera‑Based World

Media ethicists Tom Wheeler and Tim Gleason designed their test as a practical method for deciding whether a news photograph had been manipulated in ways that compromised truthfulness. Their framework depended on four questions that every edited photo was expected to pass.

Viewfinder Test: Does the final image show what the photographer actually saw in the viewfinder when capturing the moment? If major elements were added, removed, or rearranged, the image failed.

Photo Processing Test: This test permitted basic technical edits (cropping, exposure adjustments, color correction) as long as they did not alter meaning.

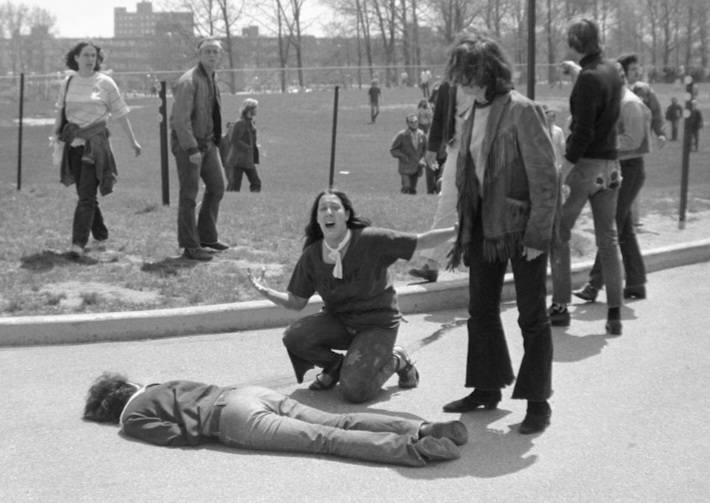

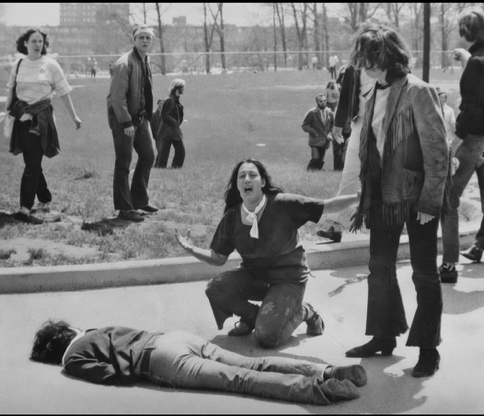

This iconic news image from Kent State shows a student who had been shot. In the original, a fence post stood directly behind a female student’s head. The later republished image removed the fence post. This alteration goes beyond what could have been done in a photo developing studio, but does it change the image’s message? Perhaps, if the discussion concerned how the students moved across campus, but otherwise, no.

Technical Credibility Test: This test asked whether an ordinary viewer could tell that the image had been altered for effect. If the manipulation was obvious or created an unrealistic enhancement, credibility was compromised.

O.J. Simpson was featured on the covers of both Time and Newsweek. The same image, processed differently, conveyed distinctly different impressions. Had the two covers not been published at the same time, the public might have been misled. The alterations were made through ordinary photo processing, yet they left markedly different impressions of the subject.

Clear Implausibility Test: This test drew a line around the impossible. If the image contained something that could not occur in reality, it was ethically unfit for news. By contrast, a photo-illustration of a giant squid attacking the Golden Gate Bridge would be seen as clearly implausible and is unlikely to be mistaken for news.

For many years, these four standards served journalists well. They were grounded in a world where every news image was anchored to a real event and a real camera. Yet that world has changed.

Why the Original Test Isn’t Enough Anymore

The Wheeler–Gleason Test was expected to endure, but it was built in an era that assumed photographs were captured. Today, many images are generated. The classic test assumes that a camera captured reality, an assumption that no longer holds.

Images can now be created without cameras, altered invisibly, and synthesized so convincingly that even experts struggle to distinguish AI-generated visuals from authentic ones. In a landscape where deepfakes drive misinformation and realistic fabrications can influence public opinion, financial markets, or political narratives, ethical guidelines require more nuance.

This leads us to a modernized rethinking of Wheeler and Gleason’s work—one better suited to an era of synthetic media.

The Modernized Wheeler Test: Ethics for Synthetic Reality

The updated framework expands the original four questions to include three additional principles designed to handle AI-generated and AI‑altered images. These are not mere updates—they represent a shift in understanding what images are.

1. The Reality Anchor Test

The first question is whether the image claims to depict real people, events, or conditions. If it does, it must meet strict truth‑preservation standards. If it does not—say, it’s labeled “AI-generated” or “conceptual”—creative freedom is acceptable. In the AI era, transparency is no longer optional. Audiences deserve to know when an image has been generated or meaningfully altered. Undisclosed AI involvement constitutes deception.

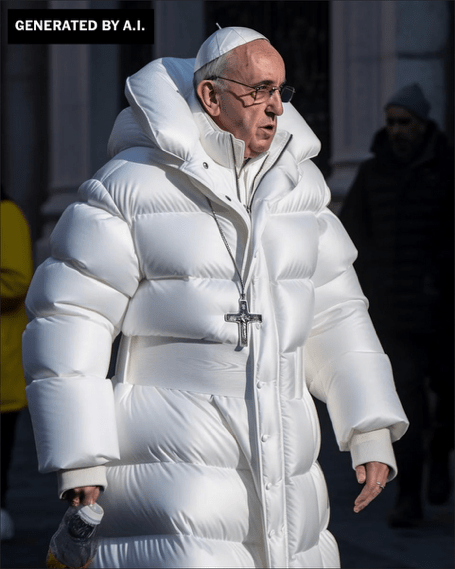

An AI-generated image that could be mistaken for a real photograph. The AI-generated “Pope in a Puffer Jacket” fooled millions because it appeared plausible yet lacked disclosure, failing both the Reality Anchor and disclosure standards. AI images can place people and events out of context. Presented as an illustration, such an image might not mislead, but as a news item or social media post it could actively deceive.

2. The Manipulation Impact Test

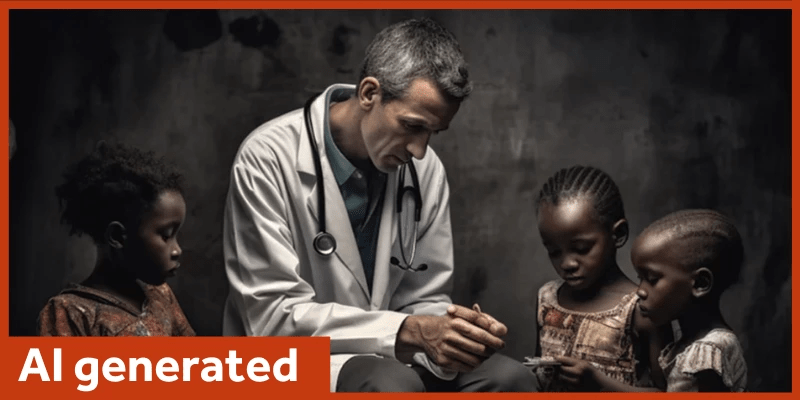

Even subtle edits can change interpretation. If manipulation significantly affects meaning, emotional tone, or factual understanding, disclosure becomes essential—or the edit must be avoided. AI systems can amplify racial, gender, and cultural biases. Ethically, image creators must avoid manipulations that reinforce stereotypes or misrepresent individuals or groups.

The image of a white medical doctor treating African children reinforces a stereotype studied by Alenichev, Kingori, and Grietens (2023), who examined AI-generated images and highlighted the bias that can arise from them. Illustrators who use AI images must be careful to avoid the bias that AI shortcuts introduce. Some of these manipulations are intentional, some are accidental, and others arise from the AI’s programming.

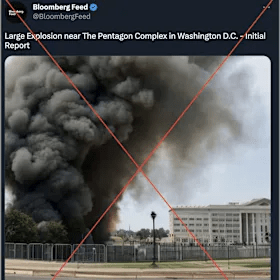

The fabricated Pentagon Explosion image briefly affected financial markets, demonstrating the consequences of synthetic misinformation and the need for verification. Especially in light of 9/11, this image can be deeply upsetting.

3. The Plausibility and Verification Test

If an image is realistic enough to be mistaken for reality, it requires rigorous verification before dissemination. Deepfakes and highly plausible AI creations pose unique risks.

Many images suggest advancements beyond what is currently available. While such images point toward the future, they may lead people to believe that events are happening when they are not.

Together, these principles redefine image ethics for a time when visuals no longer require lenses and when reality itself can be manufactured on demand. This post has avoided the most damaging or libelous images, but they are out there, misleading the public.

Visual Literacy and the Potential for Harm

In an era of synthetic and highly malleable imagery, visual literacy has become a core ethical safeguard rather than a supplemental skill. Visual literacy refers not only to the ability to interpret images, but also to understand how images are produced, altered, framed, and circulated—and how those processes shape meaning and belief. As scholars of image ethics note, photographs and videos function as testimonial evidence: audiences often treat them as direct witnesses to reality rather than as mediated constructions. This implicit trust is precisely what makes manipulated or AI‑generated images so powerful—and so potentially harmful.

Harm from images does not require that audiences fully believe them. Even plausible but false visuals can distort perception, force public denials, or place individuals at risk, especially when images depict alleged criminal behavior, political action, or institutional authority. As documented in ethics research on image manipulation, being compelled to respond to or refute a false visual claim can itself constitute harm, regardless of whether the image is ultimately accepted as true. This is particularly relevant in the age of AI, where images can circulate faster than verification mechanisms can respond.

Visual harm also operates at a structural level. Image manipulation—especially when generated or amplified by AI systems—can reinforce stereotypes, misidentify individuals, or falsely associate people with controversial or dangerous roles. Teaching materials that apply the Wheeler–Gleason Test emphasize that even technically subtle changes can significantly alter audience interpretation, shifting emotional tone or implied meaning in ways viewers may not consciously recognize. When such manipulations go undisclosed, they undermine informed judgment and violate the ethical principle of transparency.

Moreover, images do not exist in isolation. Their impact depends heavily on context, distribution, and audience expectation. As visual‑ethics scholarship explains, an image that might be acceptable as illustration or speculation becomes ethically problematic when presented in a news‑like context that implies factual accuracy. In digital environments where AI‑generated images are visually indistinguishable from photographs, the burden of ethical responsibility increasingly falls on creators, editors, and platforms to provide clear disclosure—and on audiences to approach images with critical awareness.

For these reasons, visual literacy must now include the ability to question plausibility, provenance, and purpose. Audiences must ask not only “Is this image realistic?” but “What claim is this image making?” and “What assumptions am I being invited to accept?” The modernized Wheeler framework reinforces this shift by treating realism itself as an ethical risk: the more convincingly an image mimics reality, the greater the obligation to verify, contextualize, or disclose its origins. Without such literacy, the persuasive power of images can be weaponized—intentionally or not—against individuals, institutions, and democratic trust.

Conclusion

In this post, I intentionally left out some of the most damaging images, in part to avoid offending the reader and in part to avoid liability. There are also practical publication issues, as fake images bounce from site to site. However, readers who look around will find such images and can apply the proposed test to them.

In this environment, visual literacy is no longer merely about interpretation; it is about harm prevention, ethical accountability, and the preservation of public trust in visual media. Image ethics are no longer only about manipulation—they’re about meaning, transparency, and trust. The modernized Wheeler Test provides a roadmap for preserving these values in a world where the line between real and artificial grows thinner every day.

References

Alenichev, A., Kingori, P., & Grietens, K. P. (2023). Reflections Before the Storm: The AI reproduction of biased imagery in global health visuals. The Lancet Global Health, 11(10), e1496–e1498. https://doi.org/10.1016/s2214-109x(23)00329-7

National Press Photographers Association. (n.d.). NPPA code of ethics. https://nppa.org/resources/code-ethics

Rini, R. (2017). Fake news and partisan epistemology. Kennedy Institute of Ethics Journal, 27(2), E43–E64. https://doi.org/10.1353/ken.2017.0015

Wheeler, T. H. (2002). Phototruth or photofiction?: Ethics and media imagery in the digital age. Mahwah, NJ: Lawrence Erlbaum Associates. https://archive.org/details/phototruthorphot0000whee